Understanding the True Cost of Client-Side A/B Testing

Client-side A/B testing has been a performance-loving developer’s worst friend for years.

The way it works is you load in some JavaScript (typically from a third-party domain), and that JavaScript runs, applying whichever experiments apply to the current page and visitor. Since those experiments usually involve changing how the page is displayed, these scripts are typically either loaded as blocking JavaScript (meaning nothing happens until the JavaScript arrives and gets executed) or loaded asynchronously. When they’re loaded asynchronously, they’re usually paired with some sort of anti-flicker functionality (hiding the page until the experiments run, for example by setting its opacity to 0).

With either approach, you’re putting a pause button in the middle of your page load process, and that pause can wreak havoc on a site’s performance.
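To make the two kinds of "pause button" concrete, here is a deliberately simplified timing model. It assumes a blocking script holds up parsing for its download time plus its execution time, and that an anti-flicker snippet keeps the page hidden until either the experiments have been applied or its safety timeout fires. The function names and example numbers are hypothetical and are not taken from any vendor’s actual snippet.

```python
# A deliberately simplified model of how long each loading approach keeps
# content off screen. All times are in milliseconds from the start of the
# page load; the example numbers are hypothetical.


def blocking_script_pause(download_ms: float, execute_ms: float) -> float:
    """A blocking script stops HTML parsing until it has downloaded and executed."""
    return download_ms + execute_ms


def anti_flicker_hidden_until(experiments_applied_ms: float, timeout_ms: float) -> float:
    """An anti-flicker snippet hides the page (e.g. opacity: 0) until the
    experiments are applied or its safety timeout fires, whichever comes first."""
    return min(experiments_applied_ms, timeout_ms)


if __name__ == "__main__":
    print(blocking_script_pause(download_ms=1200, execute_ms=400))  # 1600
    # Experiments applied before the timeout: the page stays hidden until they run.
    print(anti_flicker_hidden_until(experiments_applied_ms=2500, timeout_ms=4000))  # 2500
    # Slow script: the timeout caps how long the page stays hidden.
    print(anti_flicker_hidden_until(experiments_applied_ms=6000, timeout_ms=4000))  # 4000
```

Either way, nothing useful reaches the screen until the testing script has done its work (or given up).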

People use them, though, partly because of convenience and partly because of cost.

From a convenience perspective, running client-side tests is significantly easier than server-side testing. With server-side testing, you need developer resources to build each experiment. With client-side testing, experiments are often created in a WYSIWYG editor, which means marketing can try out new experiments quickly without the developer bottleneck.

I’m optimistic about edge computing as a way to solve this. Edge computing introduces a programmable layer between your server or CDN and the folks using your site. It’s simple in concept but very powerful. Moving our testing to the CDN layer lets us manipulate the content the server provides before it ever hits the browser.

From an A/B testing perspective, this means A/B testing services can still offer a WYSIWYG editor for setting up experiments, but instead of running all those experiments in the browser, they can use edge computing to apply them at the CDN layer, before the HTML is ever delivered to the user. In other words, we get the best of both worlds: the convenience of WYSIWYG-style editing, and the performance benefit server-side testing gets from shifting work out of the browser.
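As a rough illustration of what applying an experiment at the CDN layer could involve, the sketch below deterministically buckets a visitor and rewrites the origin’s HTML before it is sent on. Everything here is hypothetical: the `EXPERIMENT` format, `assign_variant`, and `apply_at_edge` are made up for illustration, and a real edge platform would use its own request/response APIs and a proper streaming HTML rewriter rather than plain string replacement.

```python
import hashlib

# Hypothetical experiment definition, roughly what a WYSIWYG editor might export:
# each variant maps to a list of (find, replace) edits on the origin's HTML.
EXPERIMENT = {
    "id": "hero-headline",
    "variants": {
        "control": [],
        "b": [("<h1>Welcome</h1>", "<h1>Welcome back!</h1>")],
    },
}


def assign_variant(visitor_id: str, experiment: dict) -> str:
    """Deterministically bucket a visitor so repeat requests get the same variant."""
    names = sorted(experiment["variants"])
    key = f"{experiment['id']}:{visitor_id}".encode()
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:4], "big")
    return names[bucket % len(names)]


def apply_at_edge(html: str, visitor_id: str, experiment: dict) -> tuple[str, str]:
    """Apply the visitor's variant to the HTML before it is sent to the browser."""
    variant = assign_variant(visitor_id, experiment)
    for find, replace in experiment["variants"][variant]:
        html = html.replace(find, replace)
    return variant, html


if __name__ == "__main__":
    origin_html = "<html><body><h1>Welcome</h1></body></html>"
    variant, html = apply_at_edge(origin_html, "visitor-123", EXPERIMENT)
    print(variant, html)
```

Hashing the experiment ID together with a visitor ID (for example, one read from a cookie) keeps assignments stable across requests without storing any state, which matters at the edge, where consecutive requests may be handled by different machines.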

The other reason that I mentioned for why folks use client-side A/B testing is cost. It’s typically much more affordable to use a client-side service than a server-side solution for this. In some cases, like Google Optimize, it’s even free.

But just because the monthly amount you’re making your checks out for (some people still write checks, right?) is low, that doesn’t mean the actual cost to the business isn’t much higher.

Let’s do a cost-benefit analysis to show what I mean, focusing on an unnamed but real-world site using Optimizely for client-side A/B testing. We’re not picking on Optimizely because they do anything worse than other client-side A/B testing solutions (all in all, they do pretty well compared to some of the other options I’ve tested), but because their popularity in the space means this example is likely relevant to many folks reading this. The tests are all run using WebPageTest, on a Moto G4, over a fast 3G network.

First, let’s look at the impact this Optimizely script has on performance when it’s in place.

The results of the test showed the site having a First Contentful Paint time of 4.4s and a Largest Contentful Paint time of 5.5s.

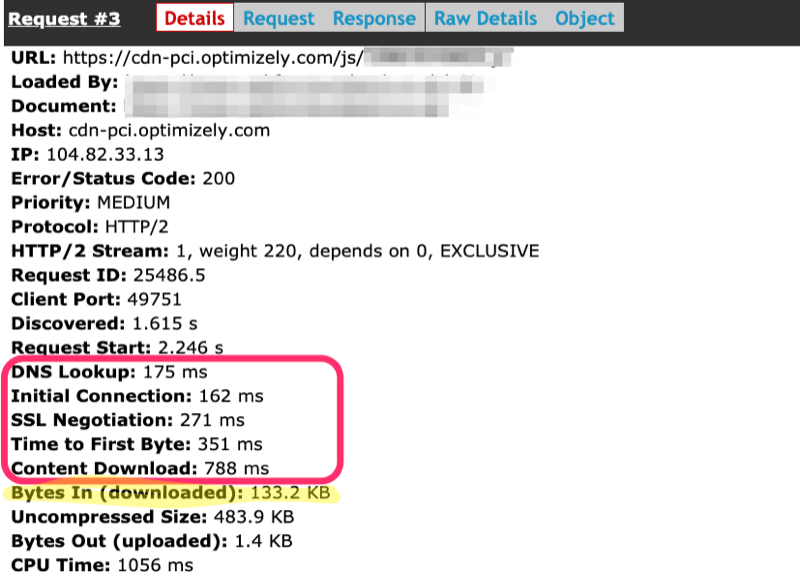

The Optimizely script is 133.2kb, and it’s loaded as a blocking script (meaning the browser won’t parse any more HTML until it gets downloaded and executed). Looking at the request in WebPageTest, the total time for it to complete, including the initial connection to the Optimizely domain, is ~1.7 seconds.

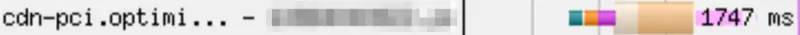

Once it’s downloaded, the pink bars following the request show a large period of script execution, which blocks the main thread.

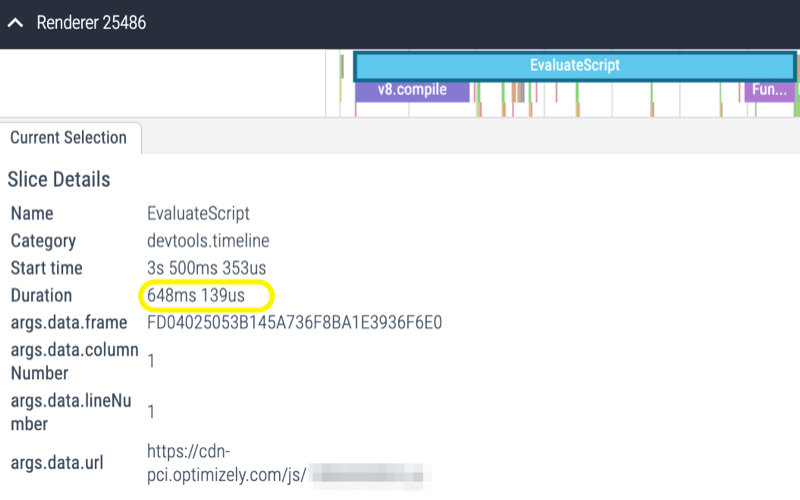

Opening up the performance timeline that WebPageTest captured, we see that immediately after download, the browser spends 648ms evaluating the script, continuing to block its main thread.

So, between download and execution, the browser is kept from parsing more HTML for around 2.4 seconds.

That doesn’t mean the actual impact is 2.4 seconds. There’s a lot going on here, including some other blocking scripts that certainly contribute to the delay, so the direct impact of the client-side testing may be smaller.

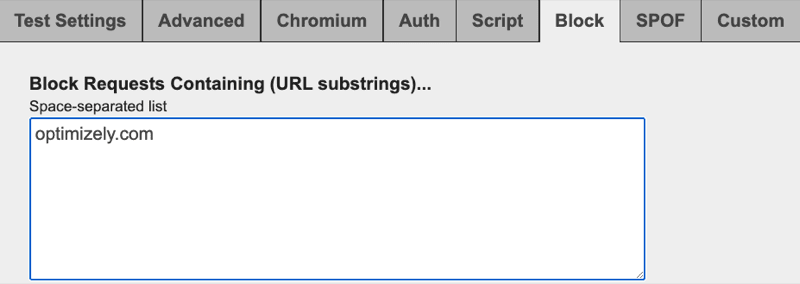

We can measure the direct impact using WebPageTest’s blocking feature, which lets us block all calls to optimizely.com.

When I did that, the result was a significant improvement in the paint metrics. First Contentful Paint dropped from 4.4s to 2.5s. Largest Contentful Paint dropped from 5.5s to 4.6s.

OK. So client-side A/B testing is adding a 900ms delay to our Largest Contentful Paint. Let’s try to put a value on that.
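Those deltas come straight from subtracting the paint metrics of the two WebPageTest runs. As a quick check (the helper name is just for illustration):

```python
def paint_delays_ms(with_script: dict, without_script: dict) -> dict:
    """Difference in each paint metric (given in seconds) between two runs, in ms."""
    return {m: round((with_script[m] - without_script[m]) * 1000) for m in with_script}


with_optimizely = {"FCP": 4.4, "LCP": 5.5}     # seconds, script loading normally
without_optimizely = {"FCP": 2.5, "LCP": 4.6}  # seconds, optimizely.com blocked

print(paint_delays_ms(with_optimizely, without_optimizely))  # {'FCP': 1900, 'LCP': 900}
```

The First Contentful Paint gap (1.9s) is larger, but the LCP gap is the one to carry forward, since Largest Contentful Paint is what the revenue estimate is based on.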

In practice, if you were doing this for your own site, you would hopefully have some data about how performance affects your business metrics. You would also have access to your actual conversion rate, average order value, monthly traffic, and the like.

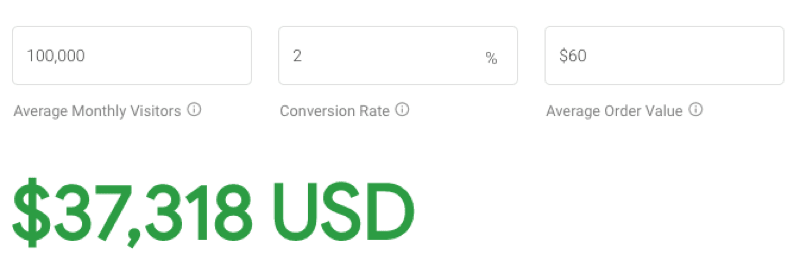

We don’t have that, so we’re going to put together a hypothetical. Let’s say this site gets 100,000 monthly visitors, has a conversion rate of 2%, and an average order value of $60. That works out to 2,000 orders and $120,000 in revenue per month, or $1.44 million a year.
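The annual figure is just monthly visitors times conversion rate times average order value times 12 months. The function name below is illustrative:

```python
def annual_revenue(monthly_visitors: int, conversion_rate: float, avg_order_value: float) -> float:
    """Yearly revenue implied by monthly traffic, conversion rate, and average order value."""
    monthly_orders = monthly_visitors * conversion_rate
    return monthly_orders * avg_order_value * 12


print(annual_revenue(100_000, 0.02, 60))  # 1440000.0
```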

While not perfect, Google’s Impact Calculator is a decent way to guesstimate potential revenue improvements from better performance. It’s based on Largest Contentful Paint (perfect for our situation) and anonymized data from sites using Google Analytics.

If we plug those numbers in, the calculator estimates that eliminating the 900ms delay caused by client-side A/B testing would garner us an additional $37,318 over the course of the year. (Now imagine how big of an impact something like the anti-flicker snippet for Google Optimize, which hides the page for up to 4 seconds, could have on revenue!)

Now we’re starting to get a picture of the actual cost of that client-side A/B testing solution. The full cost has to factor in both the price we’re paying for the service and the impact it has on the business.

It’s possible the A/B testing tool still ends up benefiting the business. But we’re starting from a bit of a hole. That $37,318 is roughly 2.6% of our annual revenue. Just to get back to zero, we need to find an experiment that makes up at least that 2.6% deficit. To come out ahead, we need to find experiments with even greater returns. And that doesn’t even cover the monthly cost of the service, which would make our initial hole even larger.
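The break-even math in that paragraph is a single ratio: the lift experiments must deliver equals the annual performance cost plus the annual service fee, divided by annual revenue. In this sketch the service fee defaults to zero because the example doesn’t state one; the $500/month figure in the second usage line is purely hypothetical.

```python
def break_even_lift(annual_revenue: float, annual_perf_cost: float,
                    annual_service_fee: float = 0.0) -> float:
    """Relative revenue lift experiments must produce just to cover the tool's total cost."""
    return (annual_perf_cost + annual_service_fee) / annual_revenue


# Performance cost only, using the figures from this example.
print(f"{break_even_lift(1_440_000, 37_318):.1%}")  # 2.6%

# Adding a hypothetical $500/month service fee.
print(f"{break_even_lift(1_440_000, 37_318, 500 * 12):.1%}")  # 3.0%
```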

Once we know both the monthly cost and the impact on the business, we can start asking some important questions:

- How much does proxying the request to Optimizely through a CDN (such as Akamai) reduce our total cost?

- Would switching to Optimizely’s Performance Edge or their full-stack solution reduce the performance impact enough to justify a potentially higher cost for the service?

- How confident are we that we can create experiments with an impact significant enough to offset our initial deficits?

- Knowing how much of an impact it has, should we disable client-side A/B testing altogether during particularly busy seasons?

A lot of this is hypothetical, I know. This is very much back-of-the-napkin math. In reality, we should be looking at actual traffic. We should be running a test to see the difference on real-user traffic with and without client-side A/B testing. We should also be using our own data to set a reasonable expectation for the impact of that service on our conversions and revenue.
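One way to run that real-user comparison is a holdout: serve a random slice of traffic without the client-side testing script, then compare conversion rates between the two groups. The sketch below uses a standard two-sided two-proportion z-test; the function name and the counts in the usage lines are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided two-proportion z-test. Returns (z, p_value)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: A = visitors who got the client-side testing script,
    # B = a randomly held-out group who didn't.
    z, p = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=230, n_b=10_000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

A test like this only tells you whether an observed difference is likely to be noise. Sizing the holdout in advance matters just as much, because small relative changes in conversion rate take a lot of traffic to detect reliably.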

But even as just an approximation, it does make a pretty clear case that while client-side A/B testing may be cheaper than a server-side solution, that doesn’t mean it isn’t expensive. Ultimately, folks may decide that for their given situation, client-side A/B testing is worth the cost, but at least by doing this sort of analysis we ensure that decision is one that has been made with a full understanding of what we’re trading off in the process.